📉 Cross Entropy Visualizer

📘 What Is Cross Entropy?

Cross-entropy is a key loss function for training classification models. It measures how close the model's predicted probability distribution is to the true distribution, which is usually a one-hot encoded label: the more probability the model assigns to the correct class, the lower the loss.

It is used mainly in classification problems, especially with softmax outputs in neural networks.

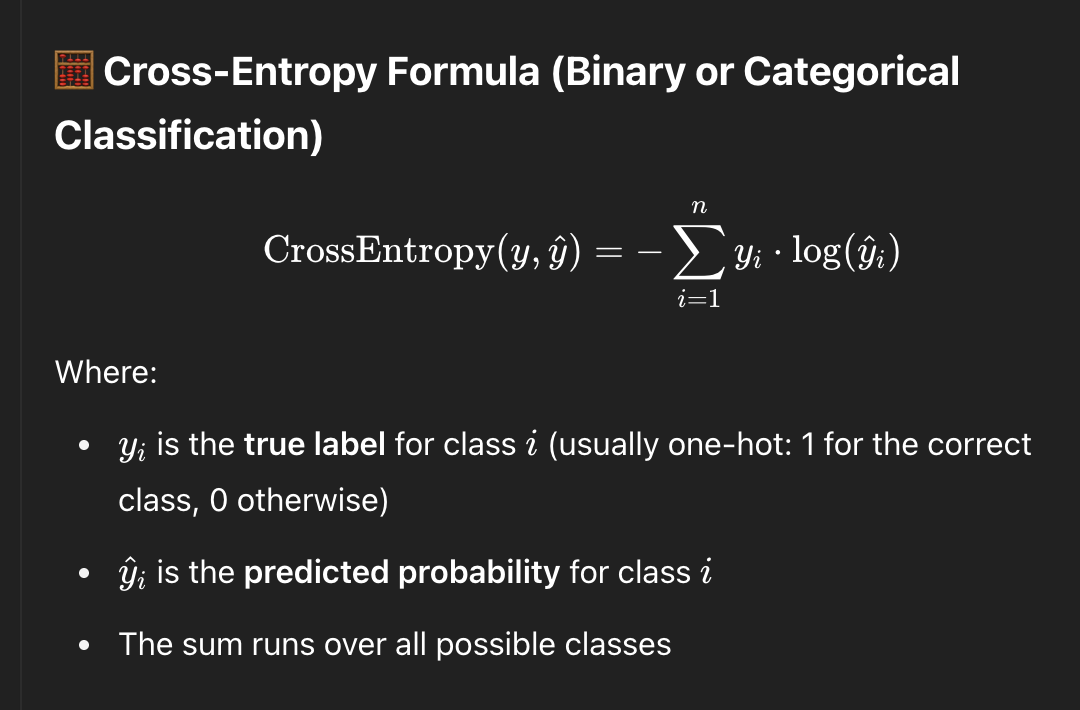

🧠 Formula:

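$$L = -\sum_{i=1}^{C} y_i \log(\hat{y}_i)$$

where $C$ is the number of classes, $y_i$ is the true label for class $i$ (1 or 0), and $\hat{y}_i$ is the predicted probability for class $i$.

In words: for each class, multiply the true label (1 or 0) by the log of the predicted probability, sum the results, and negate the total. With a one-hot label, only the correct class contributes, so the loss reduces to $-\log(\hat{y}_c)$, where $c$ is the correct class.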
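A minimal sketch of the cross-entropy formula in plain Python, assuming the true label is a one-hot list and the predictions are already valid probabilities. The function name `cross_entropy` and the `eps` clamp (which keeps `math.log` from receiving 0) are illustrative additions, not part of the formula.

```python
import math

def cross_entropy(y_true, y_pred, eps=1e-12):
    """Cross-entropy between a one-hot label and a predicted distribution.

    eps clamps each probability away from 0 so math.log never receives 0;
    this is an added safeguard, not part of the formula itself.
    """
    total = 0.0
    for y, p in zip(y_true, y_pred):
        total += y * math.log(max(p, eps))  # y_i * log(p_i)
    return -total                            # negate the sum

y_true = [0, 1, 0]           # one-hot: the correct class is index 1
good = [0.1, 0.8, 0.1]       # most probability on the correct class
bad = [0.7, 0.2, 0.1]        # most probability on a wrong class

loss_good = cross_entropy(y_true, good)  # equals -log(0.8), about 0.2231
loss_bad = cross_entropy(y_true, bad)    # equals -log(0.2), about 1.6094
print(f"good: {loss_good:.4f}, bad: {loss_bad:.4f}")
```

Because the label is one-hot, only the term for index 1 is non-zero, so each loss is just the negative log of the probability placed on the correct class; the confident wrong prediction is penalized far more heavily.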

🔄 Relationship Between Softmax & Cross-Entropy

- Softmax converts logits (raw model outputs) into probabilities.

- Cross-entropy then compares those probabilities to the true labels.

- Together, softmax + cross-entropy measure how well the model is predicting the correct class.

- Frameworks like PyTorch combine them internally: `CrossEntropyLoss` applies (log-)softmax itself, so it expects raw logits rather than probabilities.
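To show why frameworks fuse the two steps, the plain-Python sketch below computes the loss two ways: softmax followed by `-log`, and a fused form that works directly on logits using the identity `-log(softmax(z)[t]) = logsumexp(z) - z[t]`. The function names are illustrative; this mirrors the idea behind losses that accept logits, but it is not PyTorch's actual implementation.

```python
import math

def softmax(logits):
    """Convert logits to probabilities; subtracting the max avoids overflow in exp."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def ce_two_step(logits, target):
    """Softmax first, then -log of the probability of the target class."""
    return -math.log(softmax(logits)[target])

def ce_fused(logits, target):
    """Fused form: -log(softmax(z)[t]) = logsumexp(z) - z[t]."""
    m = max(logits)
    logsumexp = m + math.log(sum(math.exp(z - m) for z in logits))
    return logsumexp - logits[target]

logits = [2.0, 1.0, 0.1]
print(ce_two_step(logits, 0), ce_fused(logits, 0))  # equal up to rounding

# With extreme logits, softmax underflows to exactly 0.0 for class 2,
# so the two-step version hits log(0); the fused version stays finite.
extreme = [1000.0, 0.0, -1000.0]
try:
    ce_two_step(extreme, 2)
except ValueError:
    print("two-step: log(0) error")
print("fused:", ce_fused(extreme, 2))  # 2000.0
```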

⚙️ When Is Cross-Entropy Used?

- During training: Softmax + Cross-Entropy are applied at the final output layer.

- During inference: Only softmax is used, to turn logits into class probabilities. No loss is calculated, because there are no true labels to compare against.
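A minimal inference-time sketch in plain Python, assuming `logits` is a hypothetical output of an already-trained model for one input. No label and no loss appear. It also checks a useful property: softmax preserves the ordering of the logits, so the predicted class is the same whether you take the argmax before or after softmax.

```python
import math

def softmax(logits):
    """Convert logits to probabilities; subtracting the max avoids overflow."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def argmax(values):
    """Index of the largest value."""
    return max(range(len(values)), key=lambda i: values[i])

def predict(logits):
    """Inference only: return (predicted class, its probability). No label, no loss."""
    probs = softmax(logits)
    best = argmax(probs)
    return best, probs[best]

logits = [0.5, 2.5, -1.0]      # hypothetical output of a trained model for one input
cls, confidence = predict(logits)
print(f"class {cls}, probability {confidence:.3f}")

# Softmax is monotonic, so the argmax of the raw logits picks the same class;
# softmax is only needed when the probabilities themselves are wanted.
print(argmax(logits) == cls)   # True
```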

🧱 Common Architecture Pattern

Input → [Hidden Layers] → Final Linear Layer → Softmax → Cross-Entropy Loss

(Cross-Entropy Loss is computed only during training; at inference, the pipeline ends at Softmax.)
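A toy forward pass through the last three stages of the pattern above, in plain Python. Assumptions: `x` stands in for the output of the hidden layers, and the weights `W` and bias `b` are hand-picked, hypothetical values; there is no training loop. The final lines compute the gradient of the loss with respect to the logits. For softmax followed by cross-entropy this reduces to `probs - one_hot`, and that simple form is a commonly cited reason for pairing the two.

```python
import math

def linear(x, W, b):
    """Final linear layer: logits[j] = sum_i W[j][i] * x[i] + b[j]."""
    return [sum(w * xi for w, xi in zip(row, x)) + bj for row, bj in zip(W, b)]

def softmax(logits):
    """Convert logits to probabilities; subtracting the max avoids overflow."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(one_hot, probs):
    """-sum(y_i * log(p_i)); terms with y_i = 0 are skipped since they contribute 0."""
    return -sum(y * math.log(p) for y, p in zip(one_hot, probs) if y > 0)

# Hypothetical hidden-layer output and weights for a 3-class problem.
x = [1.0, 2.0]
W = [[0.2, -0.1],
     [0.5, 0.3],
     [-0.4, 0.1]]
b = [0.0, 0.1, 0.0]
one_hot = [0, 1, 0]

logits = linear(x, W, b)              # Final Linear Layer
probs = softmax(logits)               # Softmax
loss = cross_entropy(one_hot, probs)  # Cross-Entropy Loss (training only)

# For softmax followed by cross-entropy, dL/dlogits simplifies to probs - one_hot.
grad_logits = [p - y for p, y in zip(probs, one_hot)]
print("loss:", round(loss, 4))
print("dL/dlogits:", [round(g, 4) for g in grad_logits])
```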

📎 Special Cases

- Auxiliary Losses: Some networks like Inception or DeepLab apply additional cross-entropy losses at intermediate layers to help with training.

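- Multi-Task Learning: Models with several output heads may apply a different loss to each head (for example, cross-entropy for a classification head and mean squared error for a regression head) and combine the results into one training objective.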
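In both special cases, the separate losses usually end up as a single scalar that the optimizer minimizes. The plain-Python sketch below assumes a weighted sum. All logits and targets, along with the weights `aux_weight` and `task_weights`, are hypothetical values chosen for illustration. In practice these weights are hyperparameters tuned for each model.

```python
import math

def ce_from_logits(logits, target):
    """Cross-entropy from raw logits via the log-sum-exp trick."""
    m = max(logits)
    return m + math.log(sum(math.exp(z - m) for z in logits)) - logits[target]

def mse(pred, target):
    """Mean squared error, standing in for a regression head's loss."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)

# Auxiliary loss: hypothetical logits from the final head and an intermediate head.
label = 0
main_logits = [2.0, 0.5, -1.0]
aux_logits = [1.0, 0.8, 0.2]
aux_weight = 0.3  # hypothetical weight that down-weights the auxiliary loss
total_aux = ce_from_logits(main_logits, label) + aux_weight * ce_from_logits(aux_logits, label)

# Multi-task: a classification head (cross-entropy) plus a regression head (MSE).
task_weights = {"cls": 1.0, "reg": 0.5}  # hypothetical per-task weights
cls_loss = ce_from_logits([0.2, 1.7], 1)
reg_loss = mse([2.5, 0.0], [3.0, -0.5])
total_mtl = task_weights["cls"] * cls_loss + task_weights["reg"] * reg_loss

print(f"auxiliary total: {total_aux:.4f}, multi-task total: {total_mtl:.4f}")
```

In a real framework, one backward pass on the combined scalar sends gradients to every head. For Inception-style auxiliary classifiers, the extra heads are typically discarded at inference.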

Summary:

✅ During training, use Softmax + Cross-Entropy at the output layer

✅ During inference, use Softmax only — no loss is calculated

✅ PyTorch and TensorFlow often handle softmax + cross-entropy internally for numerical stability
